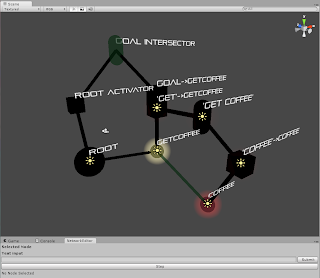

First, my latest update, in picture form. I have intersectors working, which are integral to how the algorithm operates. In this picture, only the spherical nodes were created by me: the Root node, the yellow action node GetCoffee, and the red concept node Coffee. Everything else is generated from the text input "Get coffee". The cubes are Activators: the Root Activator, the Goal Activator, and two Search Activators, one for "Get" and one for "Coffee". The Search Activations are red (the one for GetCoffee is hidden by the yellow Goal Activation). Finally, there are two intersectors: the Query Intersector "Get Coffee", which finds the intersection of the "Get" and "Coffee" search activations, and the Goal Intersector, which finds the path between the Root node and the Goal node. The "Step" button at the bottom should walk through the procedure for reaching the goal node, but it has a small bug right now. (I would fix it, but I'm leaving for Pittsburgh tomorrow afternoon and might not have time to write this post later.)
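To make the activator/intersector idea concrete, here is a minimal Python sketch of how the pieces could fit together. It is not the actual Unity implementation; everything in it is an assumption for illustration. The graph `GRAPH` and the functions `search_activation`, `query_intersector`, and `goal_path` are hypothetical, and the extra nodes GetTea and Tea exist only to give the intersector something to rank lower. It assumes a Search Activator spreads activation breadth-first from every node whose name contains the query word, with a constant `decay` per hop up to `max_depth` hops; that the Query Intersector keeps nodes active in every search activation and scores them by the product of their activations; and, as a simplification swapped in here, that the Goal Intersector can be approximated by a plain breadth-first shortest path from Root to the goal.

```python
from collections import deque

# Hypothetical toy graph (undirected adjacency lists). Root links to actions,
# actions link to the concepts they involve.
GRAPH = {
    "Root": ["GetCoffee", "GetTea"],
    "GetCoffee": ["Root", "Coffee"],
    "GetTea": ["Root", "Tea"],
    "Coffee": ["GetCoffee"],
    "Tea": ["GetTea"],
}


def search_activation(graph, word, decay=0.5, max_depth=1):
    """Spread activation from every node whose name contains `word`.

    Seeds get 1.0 and each hop multiplies by `decay`. Because all seeds start
    equal and the search is breadth-first, a node's first visit gives it its
    highest activation, so later visits are ignored.
    """
    seeds = [n for n in graph if word.lower() in n.lower()]
    activation = {n: 1.0 for n in seeds}
    frontier = deque((n, 0) for n in seeds)
    while frontier:
        node, depth = frontier.popleft()
        if depth == max_depth:
            continue  # stop spreading past the depth limit
        for nbr in graph[node]:
            if nbr not in activation:
                activation[nbr] = activation[node] * decay
                frontier.append((nbr, depth + 1))
    return activation


def query_intersector(activations, exclude=("Root",)):
    """Nodes active in every activation, ranked by product of activations."""
    common = set(activations[0])
    for act in activations[1:]:
        common &= set(act)
    common -= set(exclude)
    scores = {}
    for node in common:
        score = 1.0
        for act in activations:
            score *= act[node]
        scores[node] = score
    # Highest score first; ties broken alphabetically for a stable order.
    return sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))


def goal_path(graph, start, goal):
    """Shortest path from start to goal via plain BFS, or None if unreachable."""
    parents = {start: None}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if node == goal:
            path = []
            while node is not None:
                path.append(node)
                node = parents[node]
            return path[::-1]
        for nbr in graph[node]:
            if nbr not in parents:
                parents[nbr] = node
                queue.append(nbr)
    return None


if __name__ == "__main__":
    acts = [search_activation(GRAPH, w) for w in "Get coffee".split()]
    ranked = query_intersector(acts)
    print(ranked)  # [('GetCoffee', 1.0), ('Coffee', 0.5)]
    print(goal_path(GRAPH, "Root", ranked[0][0]))  # ['Root', 'GetCoffee']
```

With these assumptions, "Get" activates both action nodes and "Coffee" activates GetCoffee and Coffee, so their intersection favors GetCoffee, which then becomes the goal the path search walks to from Root.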

Now the "Self-Evaluation". I'm really happy that the Unity interface has caught up to my original back-end progress. Getting here felt a little slow, but I think this interface will help me find bugs much more quickly; it has already helped me spot some errors. When working with graph networks like this, it can be very difficult to catch small things like missing edges. And while it has taken time away from the underlying system, I think it is both an innovative and a useful tool for this application. I don't think I've seen a visualization of a cognitive model before, let alone one as nice-looking as this.

I'll have to scale back some of my earlier goals, but for the Beta review I plan to have a small test environment connected to the network. A simple case would be a cube that performs an action such as jumping when the user types "jump". If my Beta review is on the 1st, getting this working and making the interface more robust will be my two main goals (since I'll be at Carnegie Mellon this weekend, I don't expect to get much work done). If it's on the 4th, I'd like to have a more complicated environment, perhaps with multiple objects.

By the Final Poster session, I plan to have an environment where an agent has multiple actions available and can carry out commands like "pick up the blue box" or "walk to the green sphere". Being able to take in information from the user and store relationships would be a nice added touch. Any time left after that would go toward extra features. In general, however, I expect the major functionality to be done by the presentation date.

Also, Joe wanted me to submit a paper to IVA 2011, and that deadline is April 26th. I'm not sure how compelling my system can be by that point, but we'll see how it goes. I may focus more on the benefits of a visualization system for a language interface (since the theme of IVA 2011 is language).

To be fair, I just think this might be interesting. Don't feel like IVA makes or breaks your project; I was just trying to give you some ideas and goals to shoot for, since you're going on to do your PhD.

I like that you have the visualization up and running well.